Autonomous vision-based control system for a DJI Tello quadcopter, developed during my exchange semester at Oslo Metropolitan University. The drone detects geometric shapes via its onboard camera and executes corresponding flight manoeuvres — without any human intervention. Built in a team of four over less than three weeks, using exclusively open-source software and consumer-grade hardware.

Goals

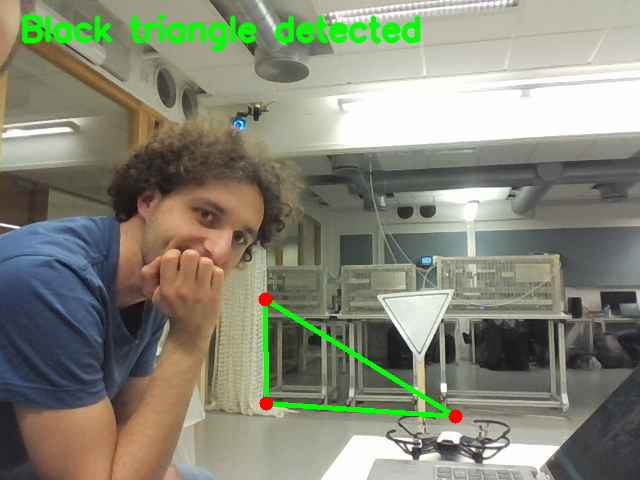

- Detect predefined geometric shapes (triangle, rectangle) in real-time using OpenCV

- Map each detected shape to a specific autonomous flight manoeuvre

- Implement 6-DoF pose estimation to determine the drone’s spatial position relative to the target

- Integrate all modules into a continuous autonomous parkour run

System Architecture

The system follows a perception–estimation–control pipeline:

- Shape Detection — The drone’s camera captures live video. OpenCV processes each frame to identify triangles and rectangles using contour detection and geometric verification.

- Pose Estimation — Once a shape is confirmed, a Perspective-n-Point (PnP) algorithm estimates the 6-DoF pose (position + orientation) of the target relative to the camera.

- Coordinate Transformation — The target pose, expressed in the camera frame, is transformed into the drone’s body coordinate system using rotation and translation matrices.

- Flight Control — The computed displacement and yaw correction are sent to the drone via the DJI Tello SDK. The drone aligns itself, then executes the manoeuvre.
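As a rough illustration of the last stage, the sketch below turns a set of corrections into Tello SDK text commands. The function name `tello_commands`, the "positive yaw means clockwise" convention, and the choice to skip moves below the SDK's minimum step are assumptions made for this example, not a description of the project's `controls.py`.

```python
MIN_MOVE_CM = 20    # Tello SDK move commands accept 20 to 500 cm
MAX_MOVE_CM = 500
MAX_YAW_DEG = 360


def tello_commands(forward_m, left_m, up_m, yaw_deg):
    """Translate corrections (metres, degrees) into Tello SDK command strings.

    Assumed convention for this sketch: positive yaw is clockwise, and the
    drone yaws first, then moves along each body axis in turn.
    """
    commands = []

    yaw = int(round(yaw_deg))
    if yaw != 0:
        direction = "cw" if yaw > 0 else "ccw"
        commands.append(f"{direction} {min(abs(yaw), MAX_YAW_DEG)}")

    axes = (
        (forward_m, "forward", "back"),
        (left_m, "left", "right"),
        (up_m, "up", "down"),
    )
    for value_m, positive, negative in axes:
        cm = int(round(abs(value_m) * 100))
        if cm < MIN_MOVE_CM:
            continue  # below the SDK's minimum step; ignored in this sketch
        direction = positive if value_m > 0 else negative
        commands.append(f"{direction} {min(cm, MAX_MOVE_CM)}")

    return commands


if __name__ == "__main__":
    print(tello_commands(1.5, -0.3, 0.1, 12.4))
```

Each string would then be sent to the drone as a UDP datagram (the SDK documentation lists 192.168.10.1, port 8889, as the command address), waiting for the drone's reply before issuing the next command.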

Vision Pipeline

Shape detection follows a five-step process:

- Image acquisition — frames captured from the Tello’s onboard camera over UDP

- Preprocessing — grayscale conversion, Gaussian blur, Canny edge detection

- Contour extraction — `cv2.findContours` with `RETR_CCOMP` to identify shapes with holes (for the rectangular frame)

- Polygon approximation — `approxPolyDP` to classify contours by vertex count

- Geometric verification — internal angles checked to confirm valid triangles (40–100°) and rectangles (≈90° corners)
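The geometric verification step can be sketched in plain Python as follows. The vertex list stands in for the output of `approxPolyDP`; the function names and the ±15° tolerance for rectangle corners are assumptions for illustration, since the text only states "≈90°".

```python
import math


def interior_angles(vertices):
    """Return the interior angle in degrees at each vertex of a convex polygon."""
    n = len(vertices)
    angles = []
    for i in range(n):
        px, py = vertices[i - 1]          # previous vertex (wraps around)
        cx, cy = vertices[i]              # current vertex
        nx, ny = vertices[(i + 1) % n]    # next vertex (wraps around)
        v1 = (px - cx, py - cy)
        v2 = (nx - cx, ny - cy)
        norm = math.hypot(*v1) * math.hypot(*v2)
        if norm == 0:
            raise ValueError("polygon has a zero-length edge")
        cos_a = (v1[0] * v2[0] + v1[1] * v2[1]) / norm
        cos_a = max(-1.0, min(1.0, cos_a))  # guard against rounding error
        angles.append(math.degrees(math.acos(cos_a)))
    return angles


def classify_shape(vertices, rect_tolerance_deg=15.0):
    """Classify a polygon as 'triangle', 'rectangle', or None.

    Triangles need every angle within 40-100 degrees (as in the text);
    rectangles need every corner within an assumed tolerance of 90 degrees.
    """
    angles = interior_angles(vertices)
    if len(vertices) == 3 and all(40.0 <= a <= 100.0 for a in angles):
        return "triangle"
    if len(vertices) == 4 and all(abs(a - 90.0) <= rect_tolerance_deg for a in angles):
        return "rectangle"
    return None


if __name__ == "__main__":
    print(classify_shape([(0, 0), (4, 0), (2, 3.4641)]))
    print(classify_shape([(0, 0), (4, 0), (4, 2), (0, 2)]))
```

Checking angles in addition to vertex count rejects slivers and skewed quadrilaterals that happen to approximate to three or four vertices.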

For the rectangular target, two detection methods were combined — Canny edges and adaptive thresholding — to improve robustness across different lighting conditions.

Pose Estimation

Pose estimation uses OpenCV’s solvePnP function with the known real-world dimensions of the rectangular target. The camera’s intrinsic parameters and distortion coefficients were obtained through a prior chessboard calibration procedure.

The result is a 6-dimensional pose vector [rx, ry, rz, tx, ty, tz] representing the rotation and translation of the target relative to the camera. This is then transformed into the drone’s coordinate frame to compute the required forward, lateral, vertical and yaw corrections.
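The transformation into the drone's frame might look like the sketch below. It assumes the OpenCV camera convention (x right, y down, z forward), a body frame with x forward, y left and z up, and a camera mounted at the body origin unless an offset is passed in. These conventions, the function names, and the way yaw is read from the target's surface normal are assumptions for illustration, not the project's actual code.

```python
import math

# Assumed fixed rotation from the camera frame (x right, y down, z forward)
# to the body frame (x forward, y left, z up).
R_BODY_FROM_CAM = (
    (0.0, 0.0, 1.0),
    (-1.0, 0.0, 0.0),
    (0.0, -1.0, 0.0),
)


def rodrigues(rvec):
    """Convert an axis-angle rotation vector into a 3x3 rotation matrix."""
    rx, ry, rz = rvec
    theta = math.sqrt(rx * rx + ry * ry + rz * rz)
    if theta < 1e-12:
        return [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    kx, ky, kz = rx / theta, ry / theta, rz / theta
    c, s = math.cos(theta), math.sin(theta)
    v = 1.0 - c
    return [
        [c + kx * kx * v, kx * ky * v - kz * s, kx * kz * v + ky * s],
        [ky * kx * v + kz * s, c + ky * ky * v, ky * kz * v - kx * s],
        [kz * kx * v - ky * s, kz * ky * v + kx * s, c + kz * kz * v],
    ]


def corrections_from_pose(pose, cam_offset_body=(0.0, 0.0, 0.0)):
    """Map [rx, ry, rz, tx, ty, tz] to forward/left/up offsets and a yaw angle."""
    rx, ry, rz, tx, ty, tz = pose
    t_cam = (tx, ty, tz)
    # Rotate the translation into the body frame, then add the camera offset.
    t_body = [
        sum(R_BODY_FROM_CAM[i][j] * t_cam[j] for j in range(3)) + cam_offset_body[i]
        for i in range(3)
    ]
    # Yaw: heading of the target's normal (third column of R) in the camera x-z plane.
    rot = rodrigues((rx, ry, rz))
    yaw_deg = math.degrees(math.atan2(rot[0][2], rot[2][2]))
    return {"forward": t_body[0], "left": t_body[1], "up": t_body[2], "yaw_deg": yaw_deg}


if __name__ == "__main__":
    print(corrections_from_pose((0.0, 0.0, 0.0, 0.2, -0.1, 1.5)))
```

With these conventions, a target 0.2 m to the right of and 0.1 m above the optical axis yields a negative `left` value and a positive `up` value; whether a positive yaw maps to a clockwise or counter-clockwise command depends on the sign convention chosen for the flight controller.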

Results

Shape detection was highly reliable — both shapes were recognised on the first attempt in every test run. Pose estimation showed minor deviations on the horizontal axis (Y), attributed to camera calibration offsets. The flight manoeuvre for the triangle was flawless; the rectangle approach required further tuning for consistent precision.

The complete parkour integration was not tested due to time constraints. Given the hardware limitations (a ~170 CHF drone) and the three-week project duration, the results were considered highly satisfactory.

My Contributions

- Overall code structure and architecture

- Main control loop (`main.py`)

- Drone control module (`controls.py`) — coordinate transformation, alignment logic, flight commands

- Pose estimation implementation

- Physical construction of parkour obstacles

- Testing and debugging